A Style-Aware Content Loss for Real-time HD Style Transfer

Artsiom Sanakoyeu* Dmytro Kotovenko* Sabine Lang Björn Ommer

Heidelberg University

In ECCV 2018 (Oral)

Paper | Supplementary | Code

Abstract

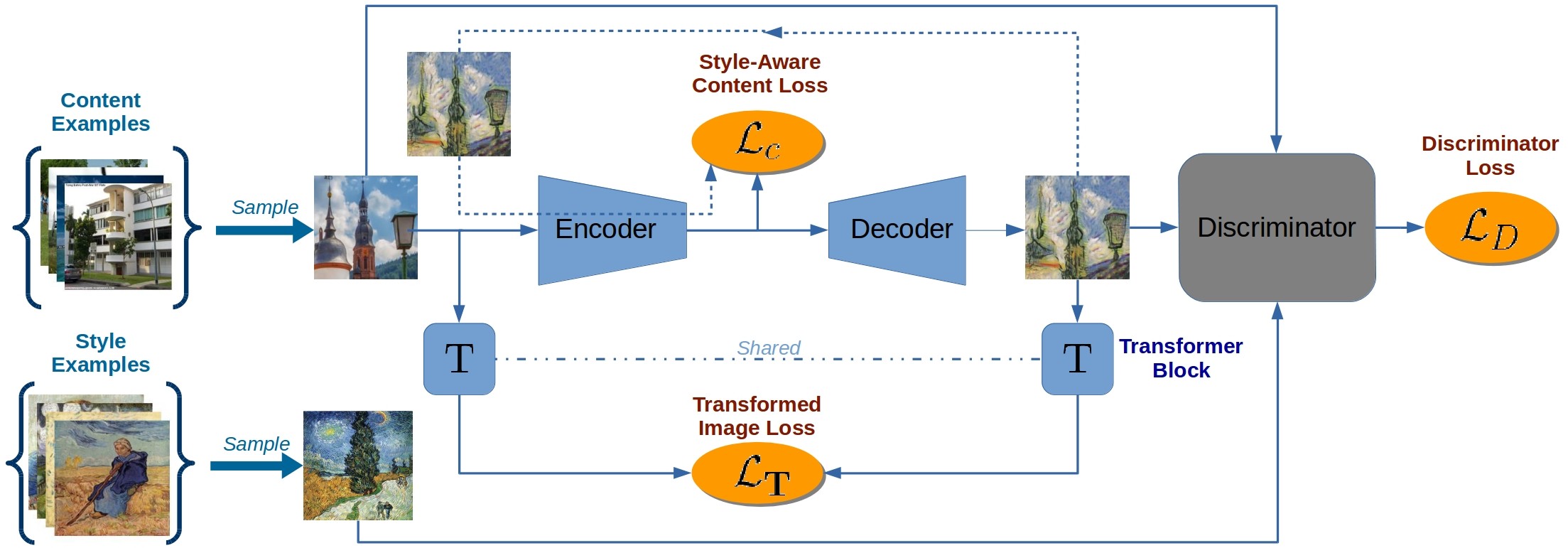

Recently, style transfer has received a lot of attention. While much of this research has aimed at speeding up processing, the approaches are still lacking from a principled, art historical standpoint: a style is more than just a single image or an artist, but previous work is limited to only a single instance of a style or shows no benefit from more images. Moreover, previous work has relied on a direct comparison of art in the domain of RGB images or on CNNs pre-trained on ImageNet, which requires millions of labeled object bounding boxes and can introduce an extra bias, since it has been assembled without artistic consideration. To circumvent these issues, we propose a style-aware content loss, which is trained jointly with a deep encoder-decoder network for real-time, high-resolution stylization of images and videos. We propose a quantitative measure for evaluating the quality of a stylized image and also have art historians rank patches from our approach against those from previous work. These and our qualitative results ranging from small image patches to megapixel stylistic images and videos show that our approach better captures the subtle nature in which a style affects content.
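As a rough illustration of what a style-aware content loss can look like, the sketch below compares the encoder's latent code of a content image with the latent code of its stylization. This page does not spell out the formula, so the exact form used here (a mean squared distance in latent space) is an assumption based on one reading of the paper, and `toy_encoder` and `toy_decoder` are hypothetical stand-ins for the trained networks, not the released TensorFlow models.

```python
from typing import Callable, List, Sequence

Vector = Sequence[float]


def style_aware_content_loss(
    encoder: Callable[[Vector], List[float]],
    decoder: Callable[[Vector], List[float]],
    content_image: Vector,
) -> float:
    """Mean squared distance between the latent codes of an image and its stylization.

    Assumed form: (1/d) * ||E(x) - E(G(E(x)))||^2, with E the encoder,
    G the decoder and d the latent dimension.
    """
    z_content = encoder(content_image)   # E(x)
    stylized = decoder(z_content)        # G(E(x))
    z_stylized = encoder(stylized)       # E(G(E(x)))
    d = len(z_content)
    return sum((a - b) ** 2 for a, b in zip(z_content, z_stylized)) / d


def toy_encoder(image: Vector) -> List[float]:
    """Hypothetical encoder: average each pair of neighbouring pixels."""
    return [(image[i] + image[i + 1]) / 2 for i in range(0, len(image) - 1, 2)]


def toy_decoder(latent: Vector) -> List[float]:
    """Hypothetical decoder: repeat each latent value twice and brighten it by 0.1."""
    return [value + 0.1 for value in latent for _ in range(2)]


if __name__ == "__main__":
    image = [0.0, 0.2, 0.4, 0.6]
    # The brightening shifts every latent value by 0.1, so the loss is about 0.1 ** 2.
    print(style_aware_content_loss(toy_encoder, toy_decoder, image))
```

Measuring the difference in the encoder's latent space, rather than in RGB or in features of an ImageNet-pretrained CNN, is what lets such a loss be trained jointly with the encoder-decoder network.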

Paper

arXiv:1807.10201, 2018.

Citation

Artsiom Sanakoyeu*, Dmytro Kotovenko*, Sabine Lang, Björn Ommer.

"A Style-Aware Content Loss for Real-time HD Style Transfer", in European Conference on Computer Vision (ECCV), 2018.

(* indicates equal contributions)

Bibtex

Code and models: TensorFlow

Supplementary: supplementary_material.zip

Slides: slides.pdf

Poster: poster.pdf

ECCV Oral Talk

Two Minute Papers Overview

Extra experiments

Real-time HD video stylization

We also apply our method to video stylization: our approach stylizes HD video (1280×720) at 9 FPS. We deliberately did not use any temporal regularization, to show that our method produces equally good results for consecutive frames with varying appearance without extra constraints.
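To put the 9 FPS figure in perspective, the short sketch below computes the per-frame time budget and the pixel throughput at 1280×720, and shows what stylizing without temporal regularization amounts to in code: every frame is processed on its own. `stylize_frame` is a hypothetical stand-in for the trained network, not a function from the released code.

```python
from typing import Callable, Iterable, List, TypeVar

Frame = TypeVar("Frame")


def frame_budget_ms(fps: float) -> float:
    """Milliseconds available per frame at the given frame rate."""
    return 1000.0 / fps


def megapixels_per_second(width: int, height: int, fps: float) -> float:
    """Pixel throughput in megapixels per second."""
    return width * height * fps / 1e6


def stylize_video(frames: Iterable[Frame],
                  stylize_frame: Callable[[Frame], Frame]) -> List[Frame]:
    """Stylize every frame independently; no state is carried between frames."""
    return [stylize_frame(frame) for frame in frames]


if __name__ == "__main__":
    print(round(frame_budget_ms(9), 1))                    # per-frame budget in ms
    print(round(megapixels_per_second(1280, 720, 9), 2))   # MP/s at 1280x720, 9 FPS
    # Hypothetical stand-in "network": invert 8-bit grey values.
    invert = lambda frame: [255 - p for p in frame]
    print(stylize_video([[0, 128], [255, 64]], invert))
```

At 9 FPS the network has roughly 111 ms per frame, which at this resolution corresponds to about 8.3 megapixels per second.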

"The horse in motion", 1878, in the style of Picasso

"Aix-en-Provence, France" video, 1878.

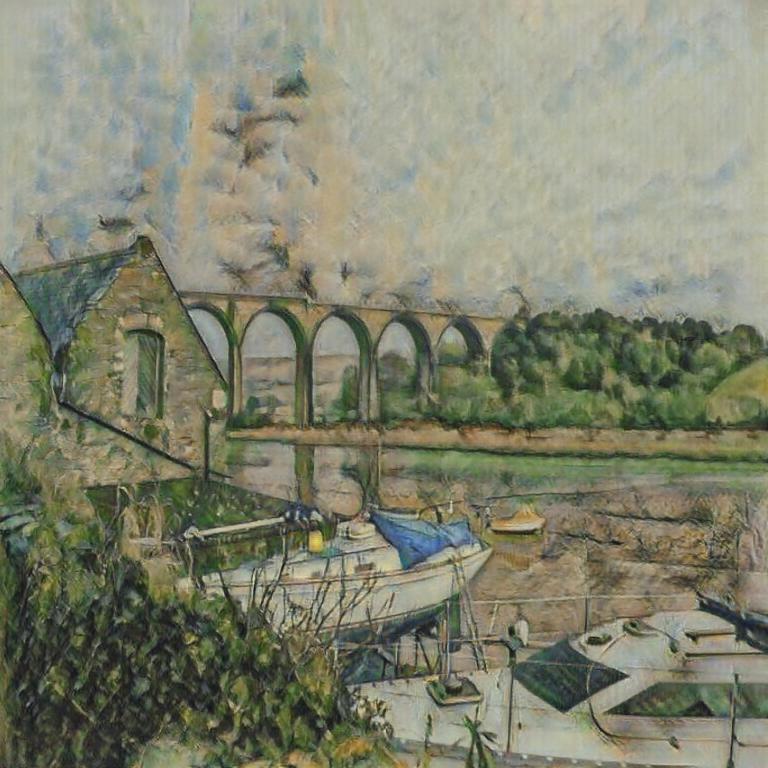

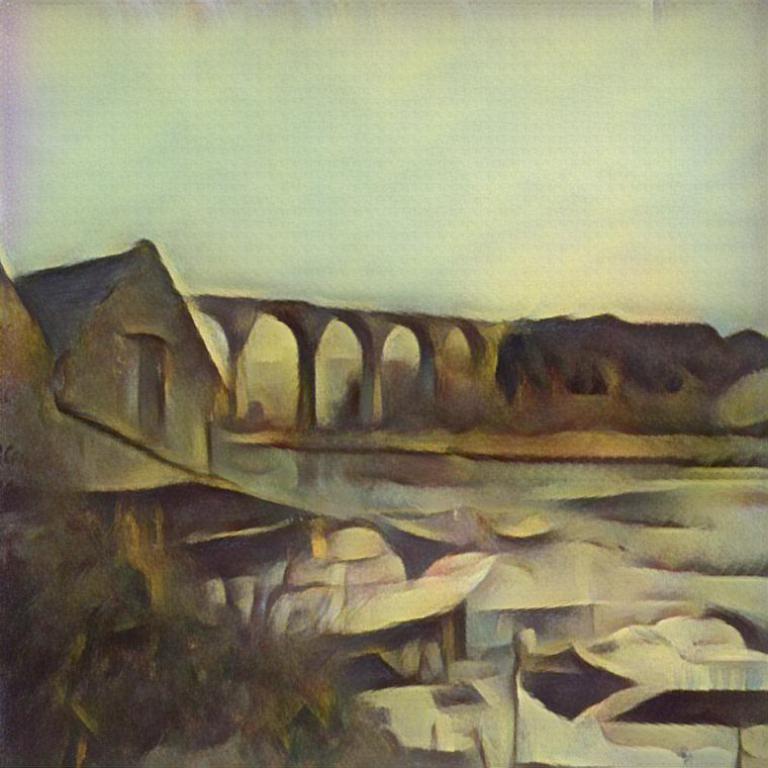

In clockwise order: content, followed by stylizations in the style of Cézanne, Picasso, and Kandinsky.

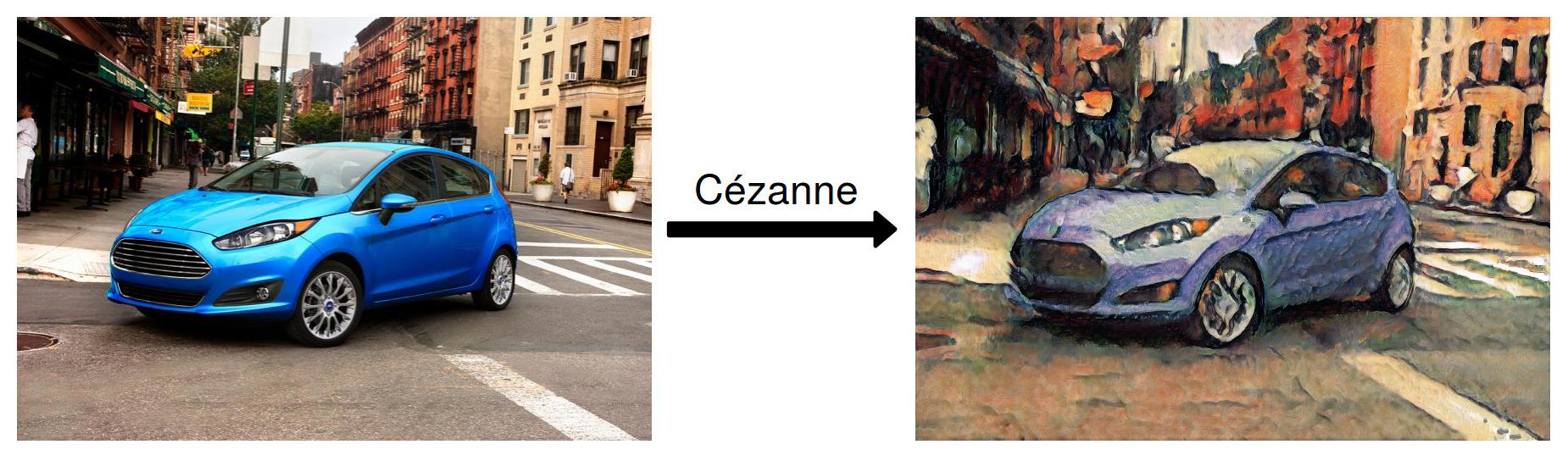

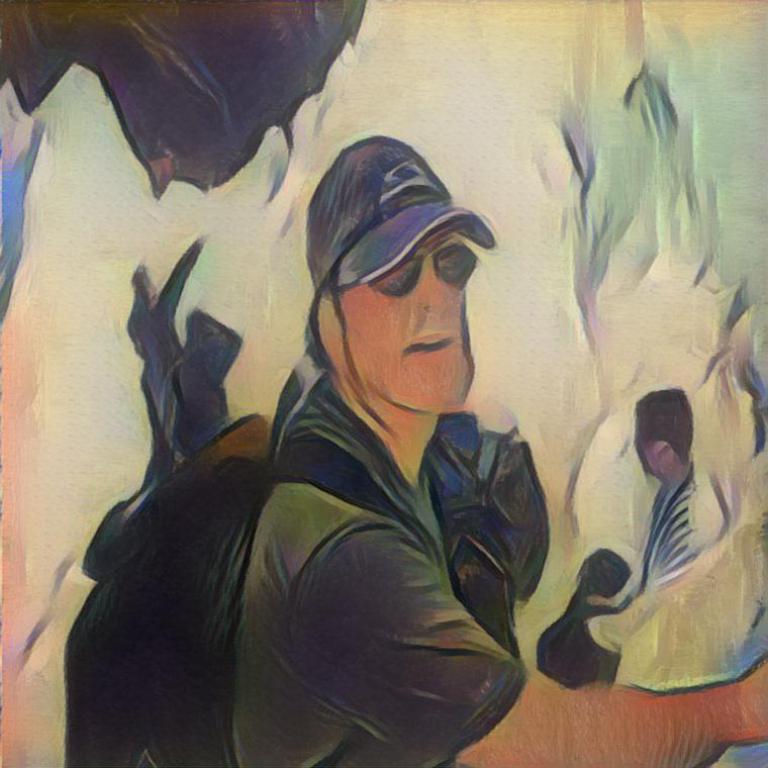

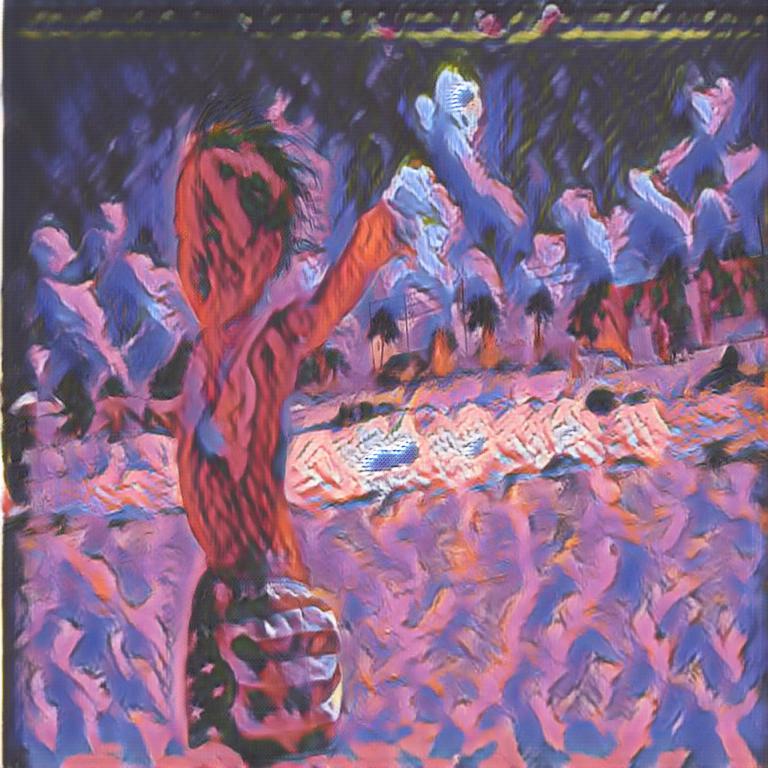

Single content in different styles

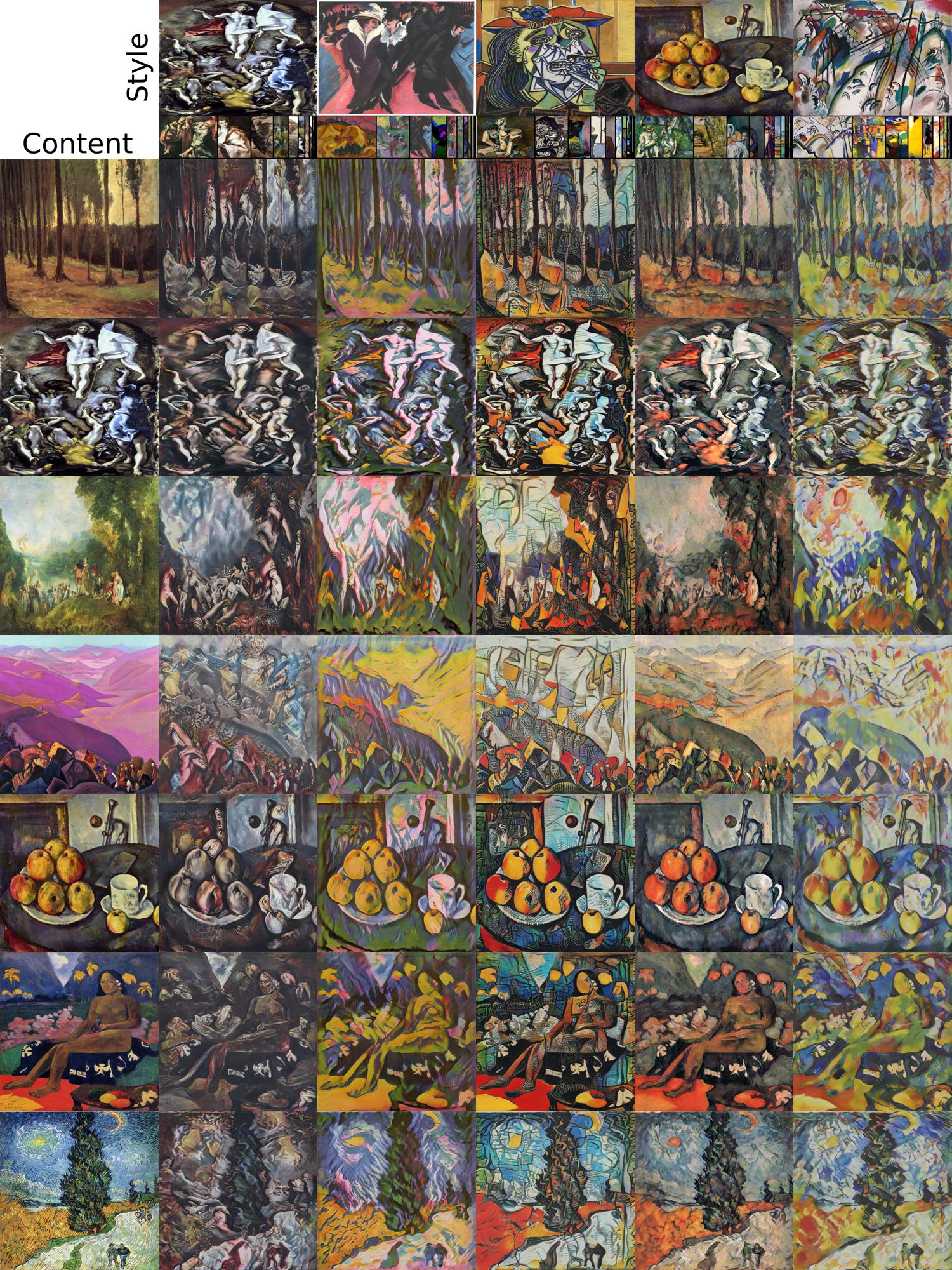

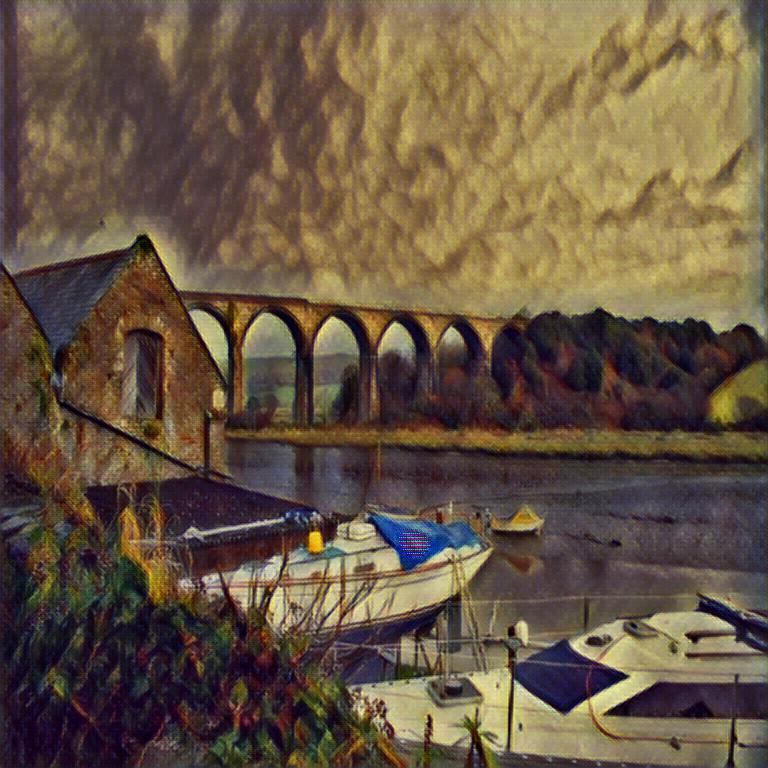

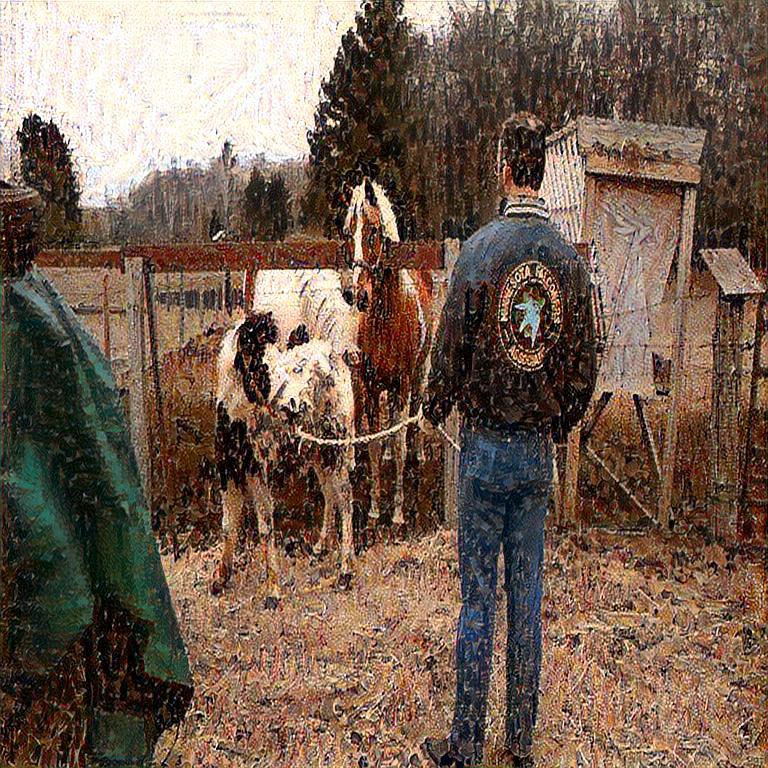

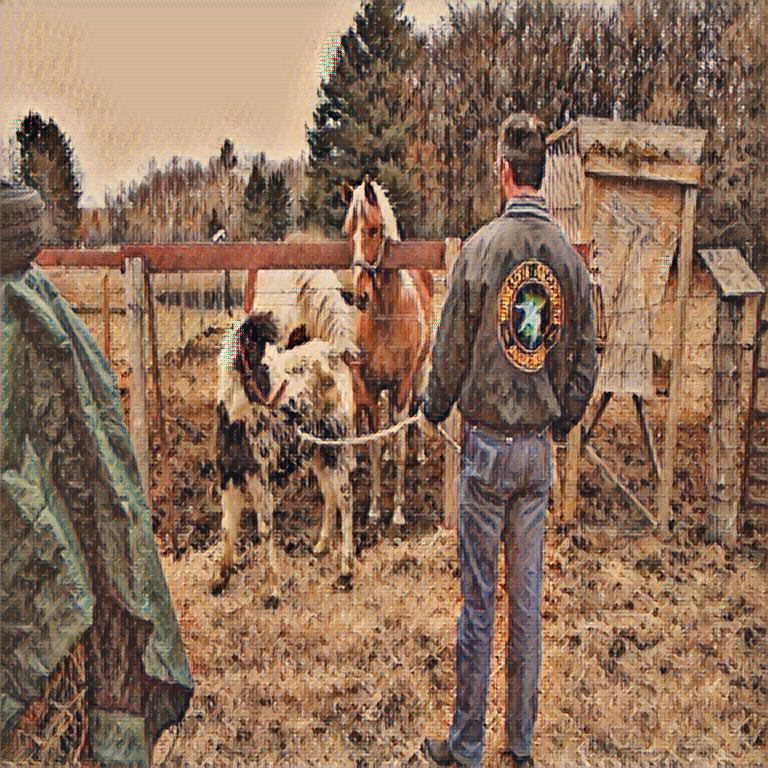

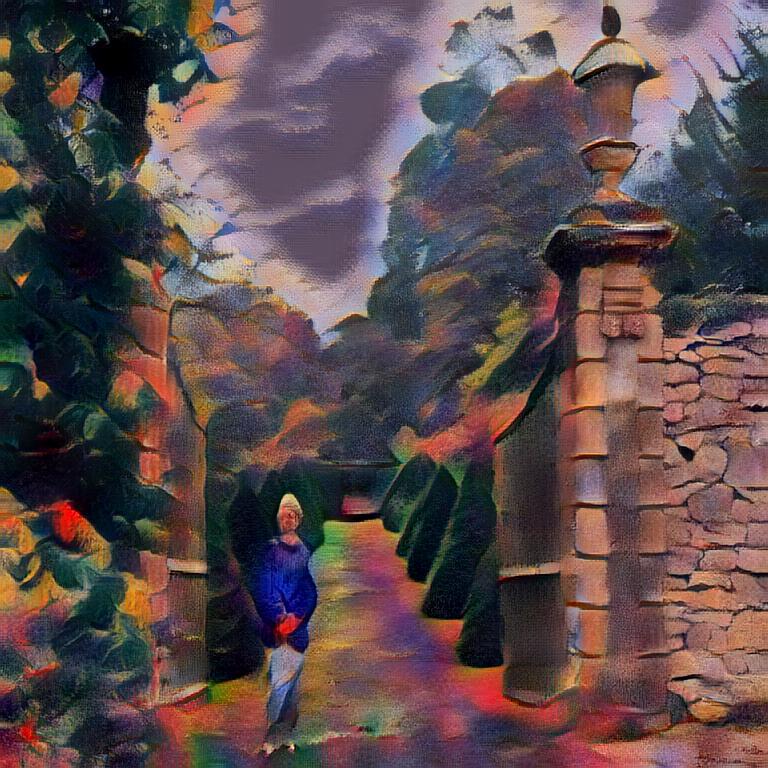

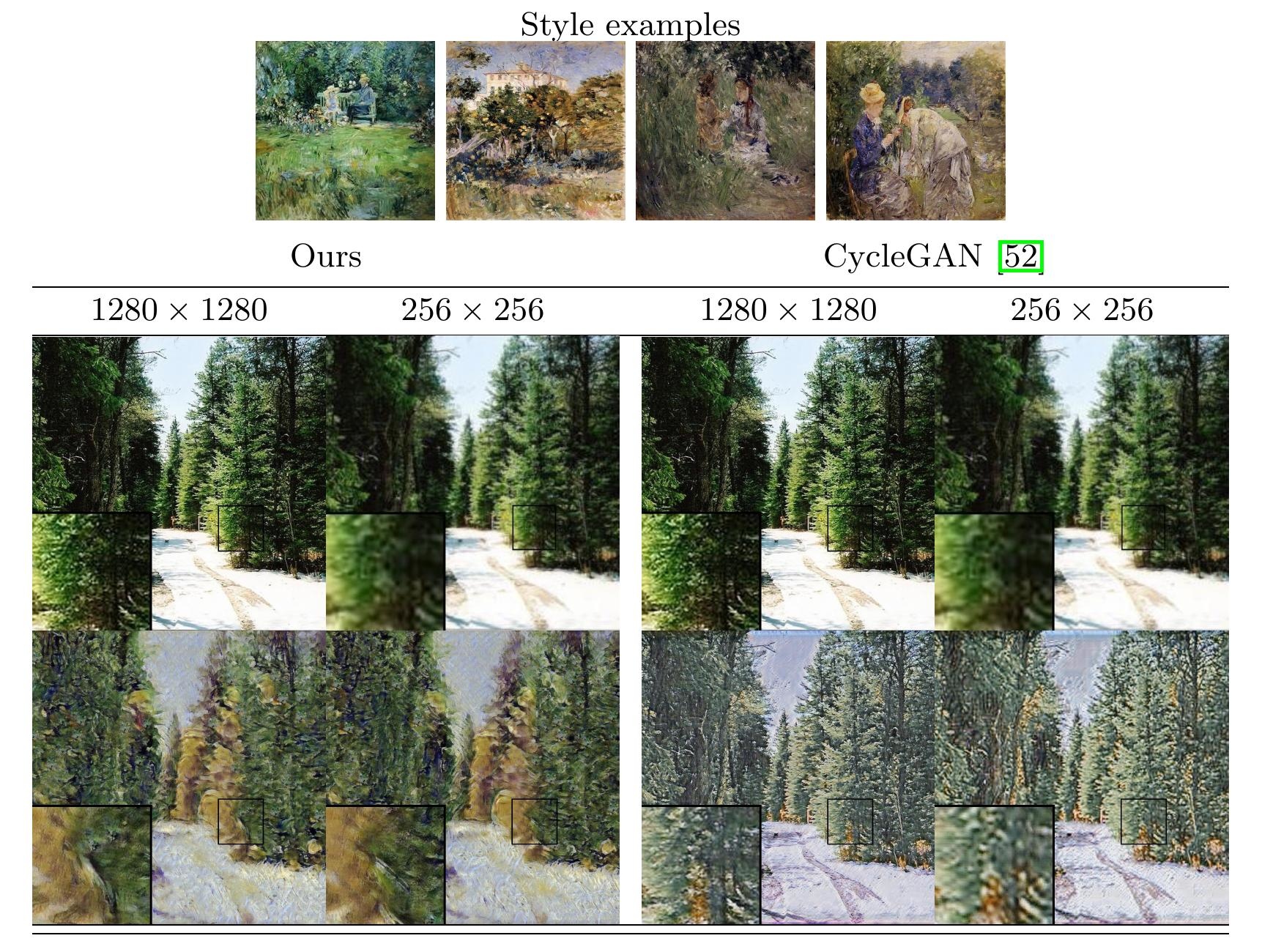

Comparison against previous style transfer methods

|

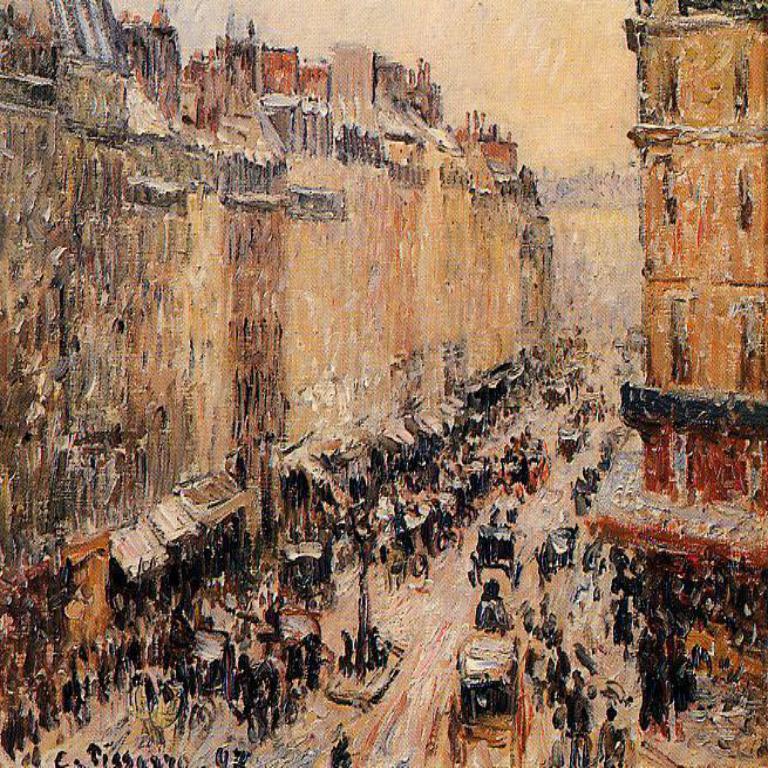

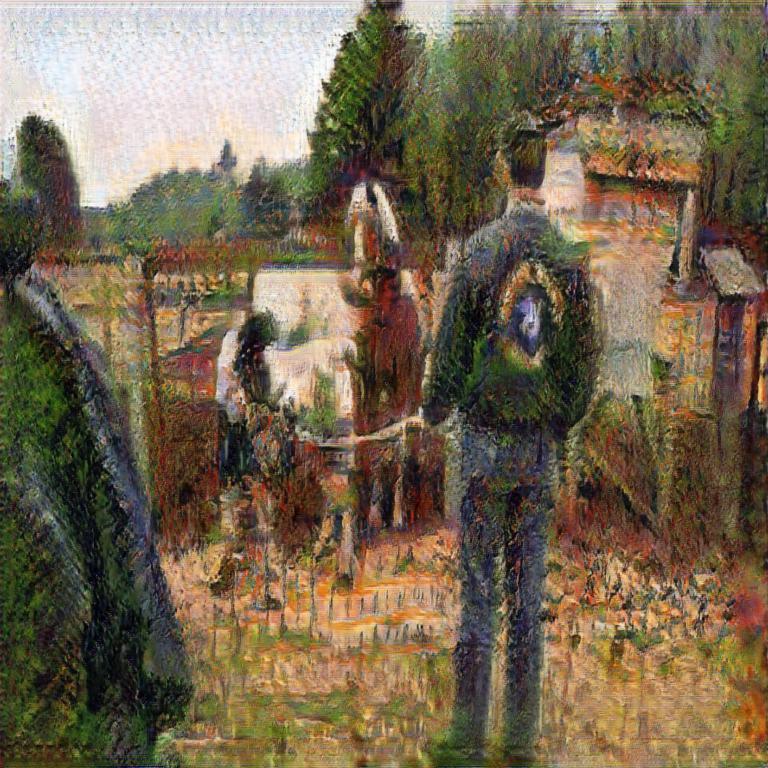

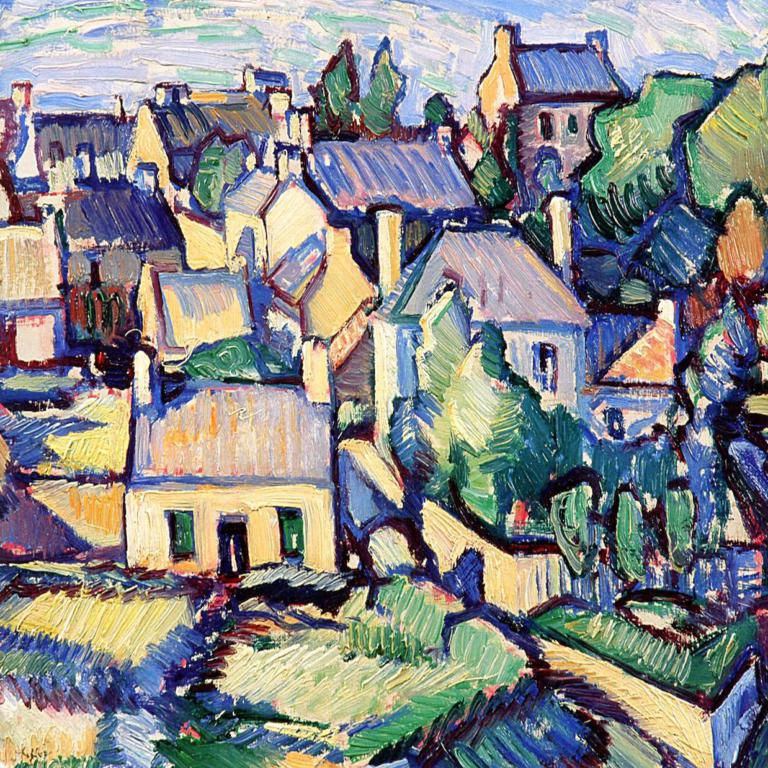

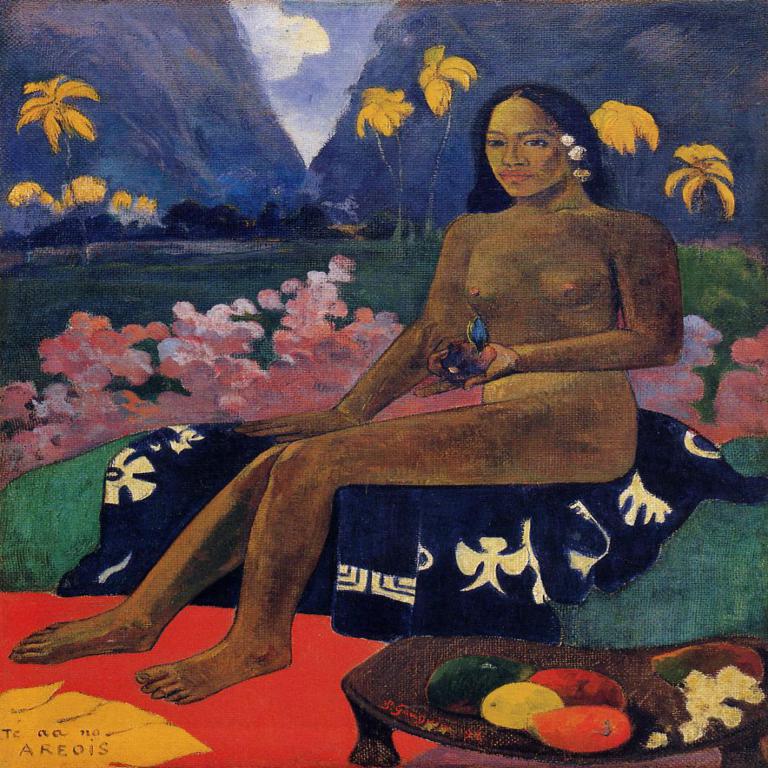

Style |

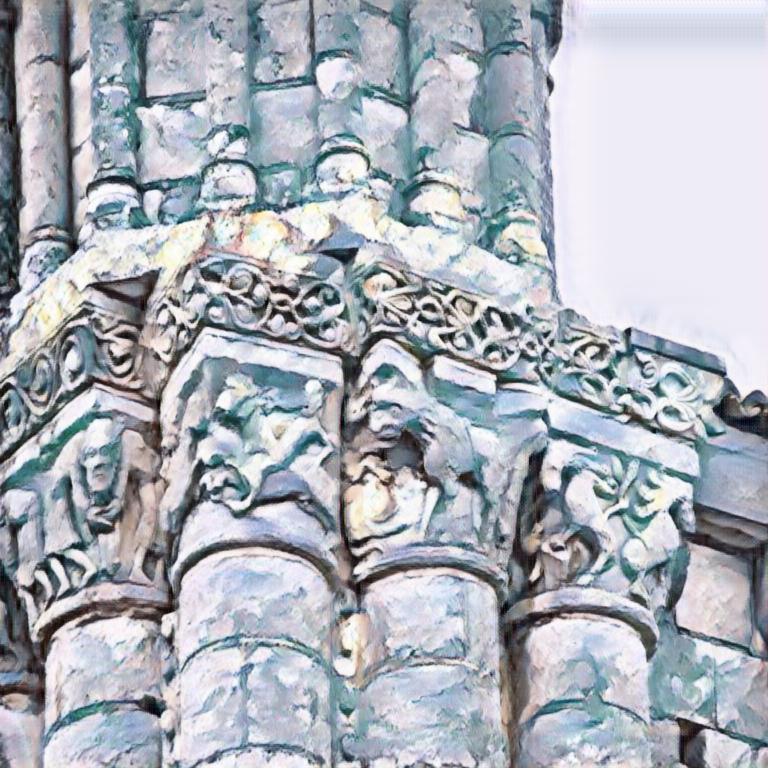

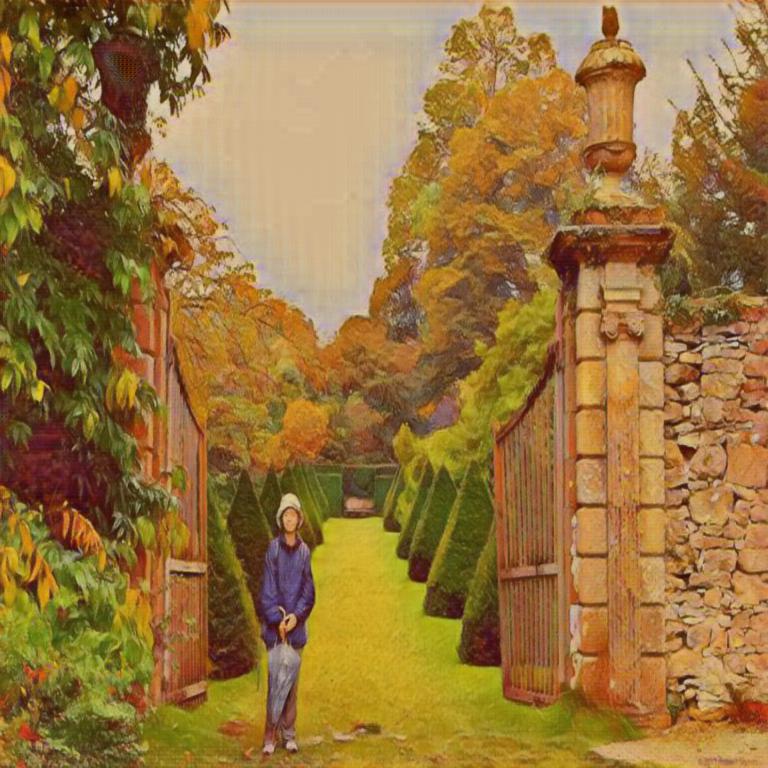

Content |

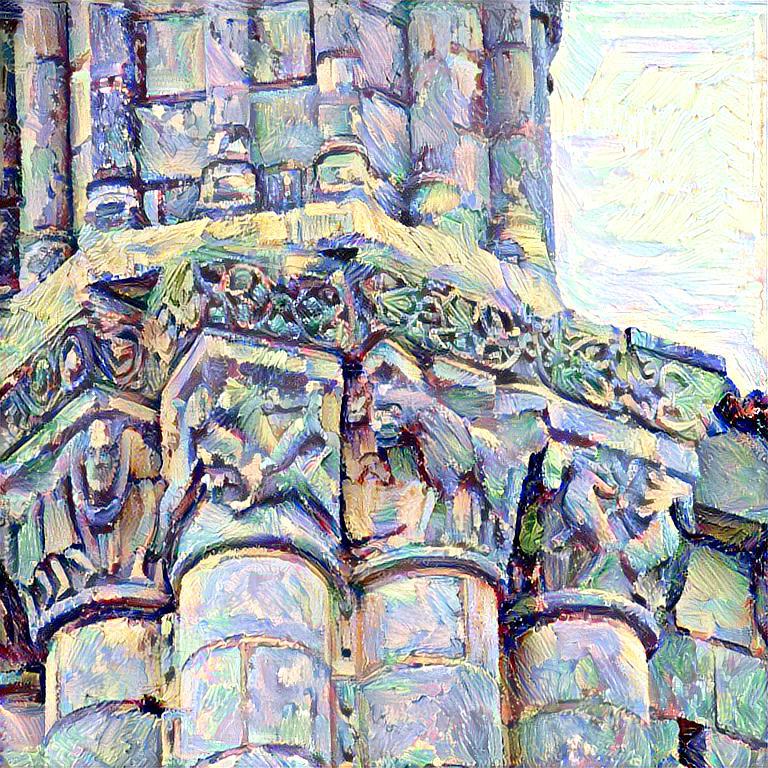

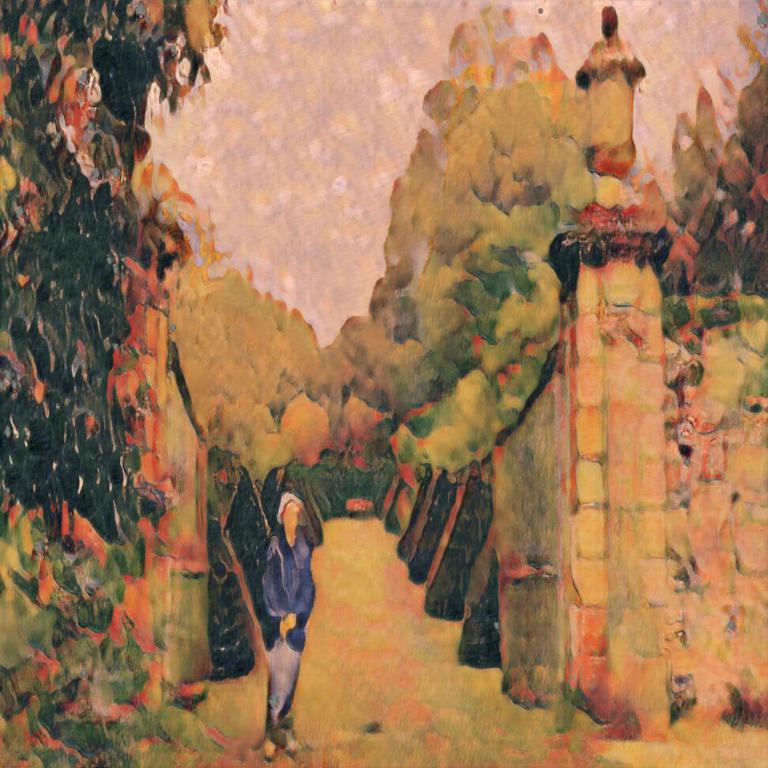

Ours |

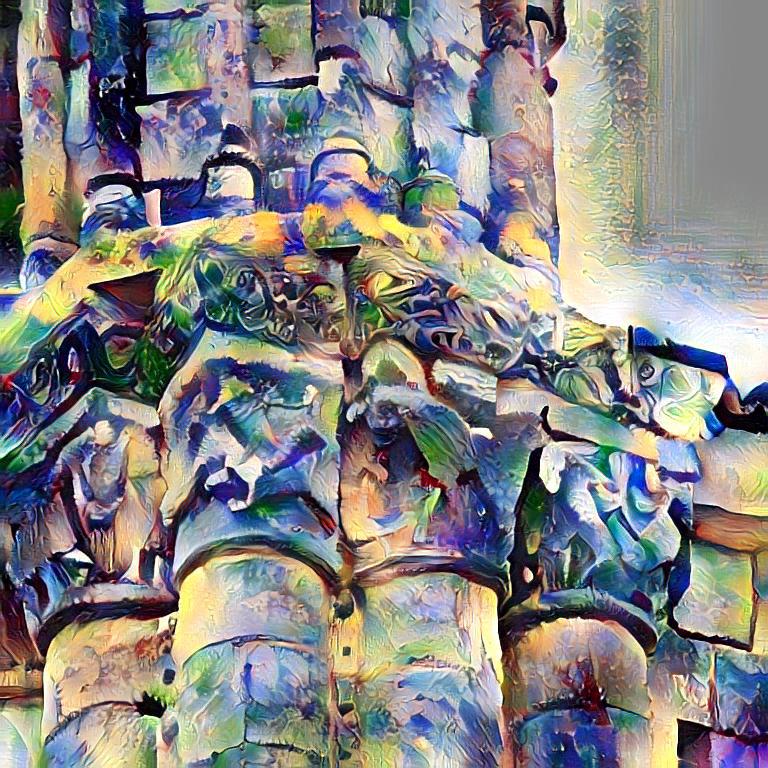

Gatys et al. [1] |

CycleGAN [2] |

PatchBased [3] |

AdaIn [4] |

WCT [5] |

Johnson et al. [6] |

|---|---|---|---|---|---|---|---|---|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

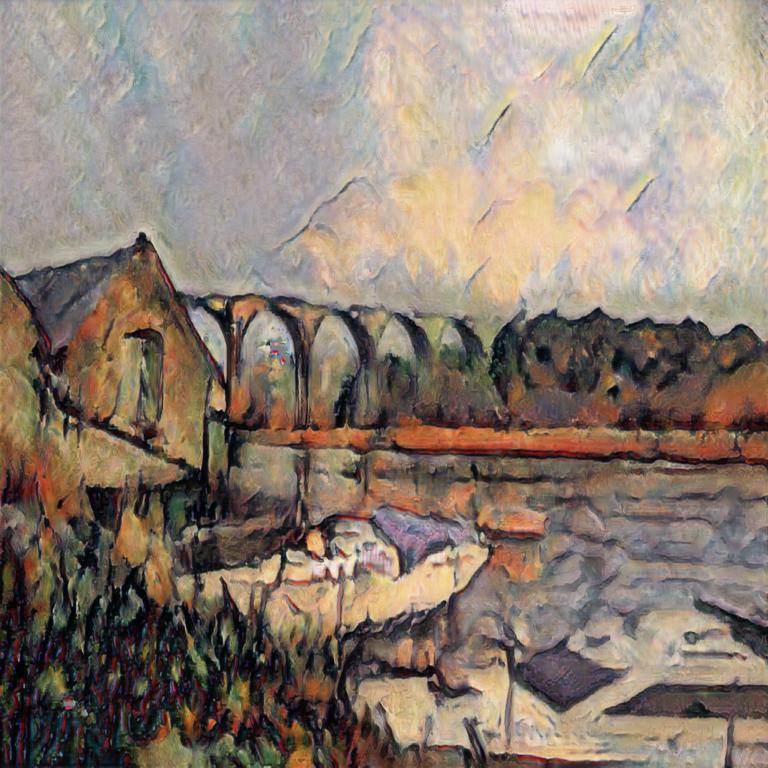

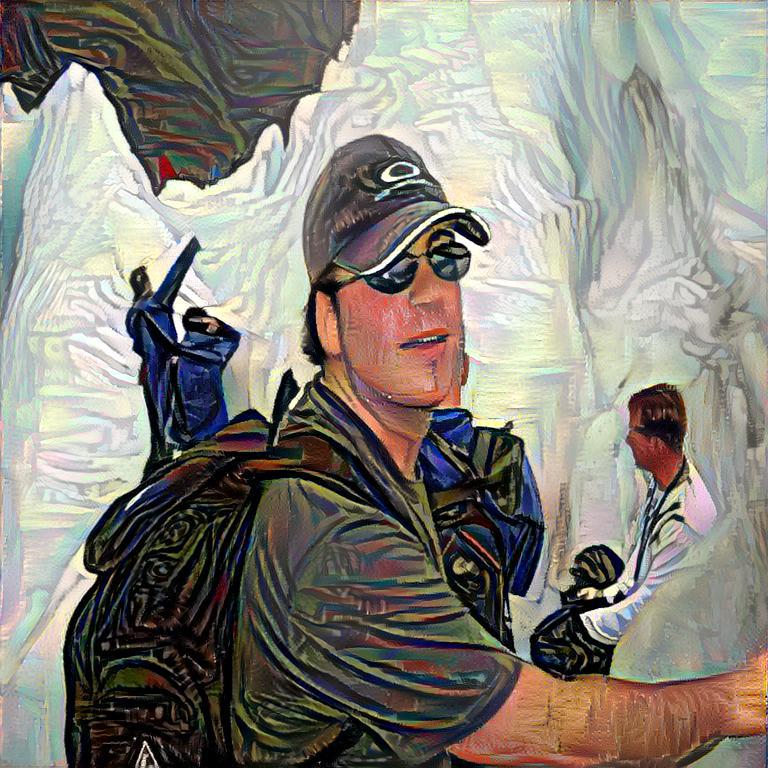

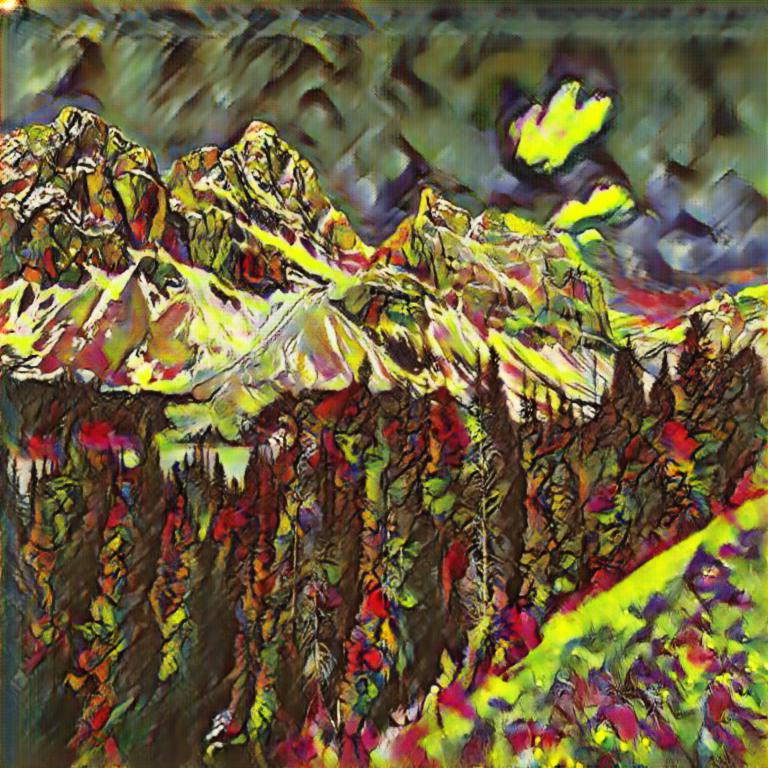

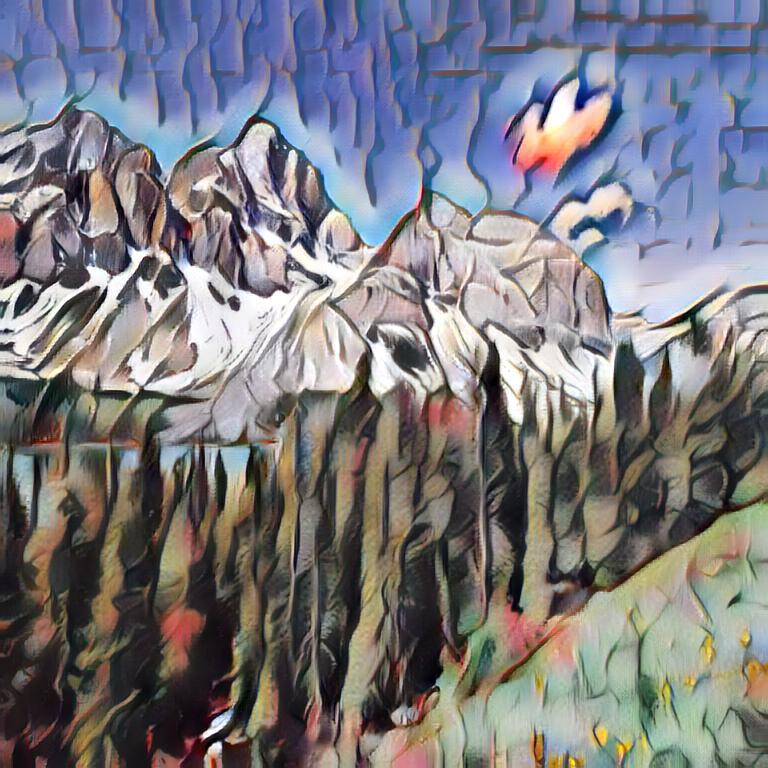

More stylizations in HR

Claude Monet, 1712×1280 pix

High-resolution stylization from low-resolution content

All Results

References

[1] Leon Gatys, Alexander Ecker, Matthias Bethge "Image style transfer using convolutional neural networks", in CVPR 2016.

[2] Jun-Yan Zhu, Taesung Park, Phillip Isola, Alexei A. Efros "Unpaired Image-to-Image Translation using Cycle-Consistent Adversarial Networks", in ICCV 2017.

[3] Tian Qi Chen, Mark Schmidt "Fast patch-based style transfer of arbitrary style", arXiv:1612.04337

[4] Xun Huang, Serge Belongie "Arbitrary Style Transfer in Real-time with Adaptive Instance Normalization", in ICCV 2017.

[5] Yijun Li, Chen Fang, Jimei Yang, Zhaowen Wang, Xin Lu, Ming-Hsuan Yang "Universal style transfer via feature transforms", in NIPS 2017.

[6] Justin Johnson, Alexandre Alahi, Li Fei-Fei "Perceptual losses for real-time style transfer and super-resolution", in ECCV 2016.